The Invisible Menu - Part 3: When Good Protocols Go Bad

Part 3 of the Invisible Menu blog series.

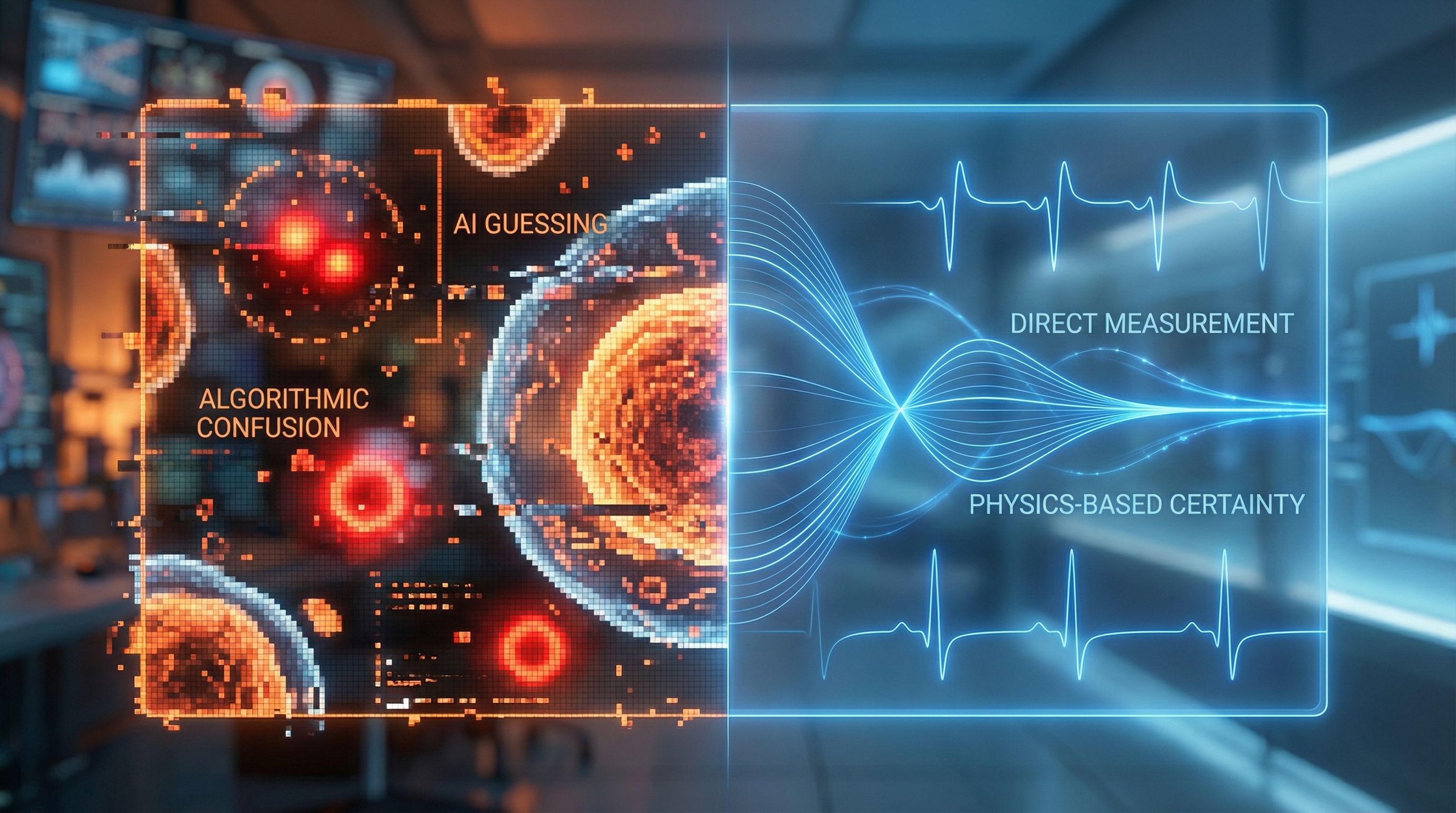

The chef stands before two kitchens. In one, cameras photograph every ingredient, and algorithms guess what's edible. In the other, a single principle—the physics of matter itself—separates food from contamination with absolute certainty.

For two episodes, you've met the villains contaminating your samples. The Invisible Contaminant. The Ambient RNA Soup. The Segmentation Failure. The Compounding Error. The QC Blind Spot. Every one lurking in your data, invisible to image-based counting.

The imaging industry has one answer: better algorithms. Smarter AI. More training data.

They're solving the wrong problem.

The Algorithm's Inherent Limitation

Here's what the imaging industry doesn't advertise: AI segmentation algorithms can fail when they encounter samples unlike the ones they were trained on. Debris patterns vary enormously. Every tissue type, every preparation method, every cell line creates its own contamination signature, and no training dataset can anticipate them all.

Even in ideal conditions—perfect focus, optimal lighting, textbook cell morphology—image-based counting carries 3-4% error per image. Not because the technology is flawed. Because the approach is fundamentally limited.

The Fundamental Problem

Algorithms interpret. They make educated guesses based on patterns they've seen before. When a new pattern appears—and in biological samples, new patterns always appear—the algorithm reverts to its best guess. A guess contaminated by every villain on the menu.

The Physics Principle That Changes Everything

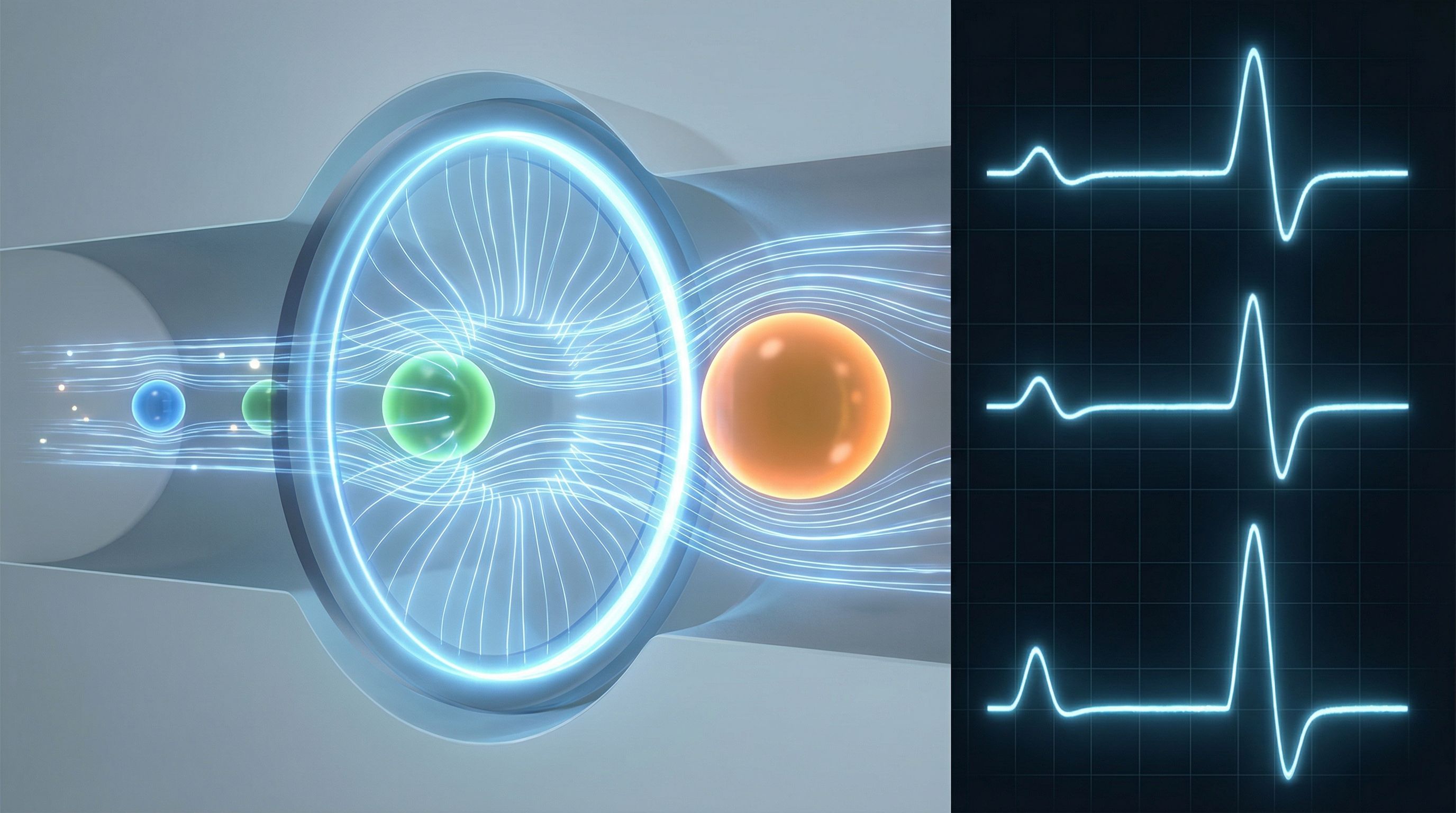

In 1953, Wallace Coulter patented a principle that would transform hematology: when a particle suspended in a conductive fluid passes through a small aperture carrying an electrical current, it displaces its own volume of fluid and produces a brief resistance change proportional to that volume. Not an interpretation. Not a guess. A direct physical measurement.

This is the Coulter principle. And it defeats every villain on your menu.
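To make "proportional to its volume" concrete, here is a minimal Python sketch of how a pulse height could be turned into a particle size. It assumes a perfectly linear response and a single-point calibration against a bead of known diameter. The function names, the millivolt units, and the bead values are illustrative assumptions, not any instrument's actual API.

```python
import math


def calibration_factor(bead_diameter_um: float, bead_pulse_mv: float) -> float:
    """Femtoliters per millivolt, derived from one bead of known size.

    Hypothetical helper: assumes pulse height scales linearly with volume.
    """
    if bead_diameter_um <= 0 or bead_pulse_mv <= 0:
        raise ValueError("bead diameter and pulse height must be positive")
    bead_volume_fl = math.pi / 6.0 * bead_diameter_um ** 3  # 1 um^3 == 1 fL
    return bead_volume_fl / bead_pulse_mv


def pulse_to_volume_fl(pulse_mv: float, fl_per_mv: float) -> float:
    """Particle volume implied by a pulse height under the linear assumption."""
    return pulse_mv * fl_per_mv


def equivalent_spherical_diameter_um(volume_fl: float) -> float:
    """Diameter of the sphere with this volume: d = (6V / pi)^(1/3)."""
    return (6.0 * volume_fl / math.pi) ** (1.0 / 3.0)


if __name__ == "__main__":
    # Assumed calibration: a 10 um bead produces a 100 mV pulse.
    k = calibration_factor(bead_diameter_um=10.0, bead_pulse_mv=100.0)
    for pulse_mv in (12.5, 50.0, 100.0):
        volume = pulse_to_volume_fl(pulse_mv, k)
        diameter = equivalent_spherical_diameter_um(volume)
        print(f"{pulse_mv:6.1f} mV -> {volume:7.1f} fL -> {diameter:5.2f} um")
```

Because volume scales with the cube of diameter, a pulse one-eighth the height of the calibration pulse corresponds to a particle half the bead's diameter. Small debris produces much smaller pulses than intact cells, which is why it shows up as its own population.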

How Physics Defeats the Villains

The Invisible Contaminant? Physics sees it. Every particle within the aperture's measurable size range, cell or debris, creates an impedance signature. Nothing hides.

The Segmentation Failure? Physics doesn't segment. It measures. There's no algorithm to confuse, no training data to fail.

The Compounding Error? When you measure actual particle volume, there's nothing to compound. Size is size. The physics doesn't lie.

The Ambient RNA Soup? Physics quantifies debris percentage before you commit expensive reagents. The soup becomes visible.

The QC Blind Spot? Physics enables objective thresholds. Pass/fail criteria based on measurement, not interpretation.

Direct Measurement vs. Algorithmic Interpretation

Consider what happens when debris enters each system:

Image-based approach: Camera captures image. Algorithm processes pixels. Neural network compares to training data. Best guess emerges. Debris excluded from count—but never quantified. Contamination remains invisible.

Physics-based approach: Particle enters electrical field. Impedance changes proportionally to volume. Size recorded directly. Debris appears as distinct population. Contamination percentage calculated instantly.
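The physics-based steps above end in a simple piece of arithmetic. As a rough sketch, assuming the instrument hands you a list of per-particle diameters and that a single size cutoff separates debris from cells, the contamination percentage could be computed like this. The function name and the 6 µm default cutoff are hypothetical; a real cutoff depends on your cell type.

```python
from typing import Dict, Iterable


def debris_summary(diameters_um: Iterable[float],
                   debris_cutoff_um: float = 6.0) -> Dict[str, float]:
    """Split measured events into debris and cells by a size cutoff.

    Illustrative only: the 6 um default is an assumed value, not a standard.
    """
    sizes = list(diameters_um)
    if not sizes:
        raise ValueError("no events recorded")
    # Anything smaller than the cutoff is counted as debris, not discarded.
    debris_events = sum(1 for d in sizes if d < debris_cutoff_um)
    return {
        "total_events": len(sizes),
        "debris_events": debris_events,
        "cell_events": len(sizes) - debris_events,
        "debris_pct": 100.0 * debris_events / len(sizes),
    }


if __name__ == "__main__":
    # Made-up diameters (um) for demonstration, not real measurements.
    events = [2.0, 3.0, 4.5, 9.0, 10.0, 11.0, 12.0, 12.5, 13.0, 14.0]
    print(debris_summary(events))
```

The point of the sketch is that debris stays in the denominator. It is counted and reported rather than silently excluded.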

The Critical Distinction

The difference isn't incremental improvement. It's a fundamentally different relationship with truth. One approach asks: "What does this look like compared to what I've seen before?" The other asks: "What is the physical size of this particle?" Only one question has an absolute answer.

Why the Industry Resists This Truth

Image-based counters dominate the market. Their manufacturers have invested heavily in AI development, camera technology, and segmentation algorithms. Acknowledging that physics-based detection solves problems their algorithms cannot would undermine entire product lines.

So the marketing continues: "Our AI is trained on millions of images." "Our algorithms achieve 99% accuracy." "Our segmentation handles debris automatically."

None of these claims address the fundamental limitation: algorithms interpret. Physics measures. Interpretation can be wrong. Measurement cannot.

The villains on your menu don't care about marketing claims. They care about physics. And physics is the only language they fear.

The Menu, Rewritten

Imagine ordering at a restaurant where the chef doesn't guess what's in your dish—they weigh every ingredient on a precision scale. No interpretation. No estimation. Just measurement.

That's what physics-based impedance detection offers your sample QC workflow:

- Every particle measured—cells and debris alike, with their actual physical size

- Debris percentage quantified—not excluded and forgotten, but visible and actionable

- Contamination thresholds set—objective pass/fail criteria based on real measurements

- Standardized QC checkpoints—reproducible across users, timepoints, and samples

The invisible menu becomes visible. The villains become quantified. The guessing ends.
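An objective checkpoint can be as small as a handful of numbers written down once and applied the same way by every user. The sketch below is one hypothetical way to encode that in Python. The threshold values (20% debris, 1,000 cell events) are placeholders chosen for illustration, and each lab would set its own.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class QCThresholds:
    """Pass/fail limits for one sample type (placeholder values)."""
    max_debris_pct: float = 20.0
    min_cell_events: int = 1000


def qc_checkpoint(debris_pct: float, cell_events: int,
                  thresholds: QCThresholds = QCThresholds()) -> Tuple[bool, List[str]]:
    """Return (passed, reasons) so every failure is explained, not just flagged."""
    reasons: List[str] = []
    if debris_pct > thresholds.max_debris_pct:
        reasons.append(
            f"debris {debris_pct:.1f}% exceeds limit {thresholds.max_debris_pct:.1f}%"
        )
    if cell_events < thresholds.min_cell_events:
        reasons.append(
            f"only {cell_events} cell events, need {thresholds.min_cell_events}"
        )
    return (not reasons, reasons)


if __name__ == "__main__":
    print(qc_checkpoint(debris_pct=12.0, cell_events=5000))
    print(qc_checkpoint(debris_pct=35.0, cell_events=800))
```

Keeping the thresholds in a frozen dataclass means the criteria cannot drift between runs. Two users measuring the same sample get the same verdict for the same stated reasons.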

Key Takeaway

Better AI won't save your data. More training images won't expose the contaminants. Faster cameras won't quantify your debris. Physics will. The Coulter principle—the same physics that transformed clinical hematology—offers research laboratories what imaging never can: direct measurement of what's actually in your sample.

In Part 4 of The Invisible Menu, we'll reveal the recipe—how physics-based detection transforms debris quantification from impossible to instantaneous. The workflow that exposes every villain. The QC checkpoint that should have existed all along.

Trust Physics, Not Pixels

Discover how impedance-based detection quantifies what image counters miss—every particle, every time.